Two finance leaders from Aleph and YipitData on the mindset shifts, rising AI costs, and practical moves CFOs are making right now.

A year ago, most CFOs were skeptical about AI’s usefulness. Today, they’re wondering what to do about it: what to budget, how to explain rising costs to the board, and how to steer a team that’s spent a decade building in Excel toward something that changes every couple of weeks.

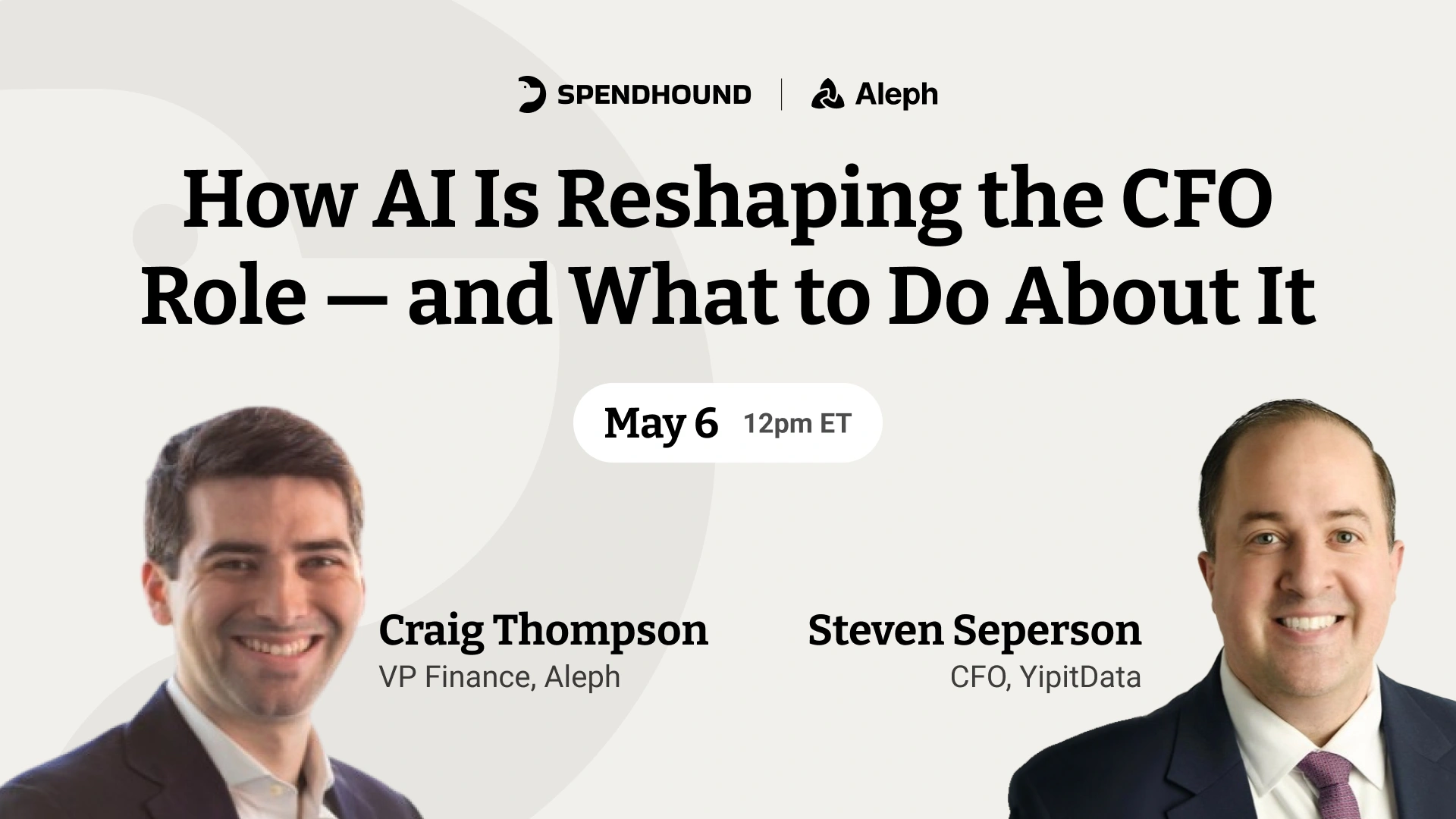

Those are the questions Steven Seperson, CFO of SpendHound and YipitData, brought to Aleph’s latest fireside session. He and Craig Thompson, VP of Finance at Aleph, spent nearly an hour working through what AI adoption actually looks like inside a finance function.

The session covered where AI is generating ROI today, how to frame rising AI spend for the board, whether the annual budget cycle is dying, and what it means to manage a team when AI fluency becomes table stakes for hiring.

Top takeaways:

Craig opened with an observation: finance people are, in some ways, attached to the hard way of doing things. Late nights, earned insights, sweat equity, spreadsheet mastery. There’s an identity built around that kind of work.

That attachment, he suggested, is part of why AI adoption in finance has lagged behind other functions. It’s not that finance leaders don’t understand the tools (or their value). It’s that the tools challenge a belief that difficulty was part of the point.

Steven’s take was more practical. The question, he said, isn’t whether to embrace AI but where you are on your journey and what your next step looks like:

“There was definitely skepticism [around AI] at first. You’d go into ChatGPT, start asking questions, and it’d work with you more as an assistant. But now it’s evolving.” — Steven Seperson

He framed AI’s adoption curve as genuinely nonlinear: skepticism, then curiosity, then the moment where a use case clicks and your mindset shifts. The teams moving the fastest, in his view, are the ones that created structural pressure to experiment.

When someone asked about a reasonable AI budget for a 200-person company, Craig’s argument was clear: there’s no world where a company should be less profitable because of AI. That means AI spend isn’t a new line item; it’s a reallocation of budget that already exists.

“Your knowledge budget used to be 100% headcount because there was nothing else producing knowledge besides people.” — Craig Thompson

When you hire a new analyst, you expect a training ramp before you see returns. AI investment deserves the same framing: an experimentation phase with higher costs up front, followed by a productivity gain that shows up in the numbers. Operating margin, EBITDA, CAC payback — whatever your core metrics are, AI spend has to tie back to them.
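To make the ramp framing concrete, here is a minimal sketch of the payback arithmetic. Every figure in it is hypothetical and chosen only for illustration; neither speaker cited these numbers. It assumes a flat monthly AI spend and a productivity gain that ramps up after an experimentation phase, then finds the month where cumulative net value stops being negative.

```python
from itertools import accumulate

# All figures below are hypothetical, for illustration only.
monthly_ai_cost = 10_000  # assumed flat monthly AI spend
# Assumed monthly value of the productivity gain, ramping after an experimentation phase
monthly_gain = [0, 0, 5_000, 10_000, 15_000, 20_000, 20_000, 20_000]

# Net value per month, then the running total
net_by_month = [gain - monthly_ai_cost for gain in monthly_gain]
cumulative_net = list(accumulate(net_by_month))

# First month (1-indexed) where the running total is no longer negative,
# or None if that never happens within the window
payback_month = next(
    (month for month, total in enumerate(cumulative_net, start=1) if total >= 0),
    None,
)

print(cumulative_net)
print(payback_month)
```

The same shape applies whichever core metric you use: the early months run negative, and the case to the board rests on when, and whether, the running total recovers.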

Steven added that the calculus gets more complicated when AI is embedded in the product itself (i.e., part of what’s generating revenue). In that case, AI costs may scale with the business in a way that looks different from traditional OPEX, and your pricing has to evolve alongside it.

“There’s a world where you might want to lean into AI as a high growth company, and it could even further accelerate your strategy.” — Steven Seperson

Notably, neither Steven nor Craig was willing to endorse inventing new KPIs to justify AI spend. The frameworks that have held for decades (operating leverage, EBITDA, growth efficiency) still apply. The work is fitting AI investment into those frameworks.

Craig identified two categories where he’s seeing returns in production environments today.

The first: connecting the dots. Getting relevant context to the right person at the right time (e.g., a new account exec working a deal with prior history; a marketing team researching customer use cases). AI has made institutional knowledge accessible in a way that used to require a lot more bothering.

The second: last-mile delivery of manual tasks. He was specific about Claude Cowork, which he’d been using for six weeks at the time. The ability to have an agent execute tasks inside Slack — updating Stripe directly from a conversation, reviewing discrepancies across systems — has been what he described as a “power law” change in what a small finance team can get done.

For example, Aleph’s RevOps team built an MSA reviewer using a Claude skill. When an account exec receives red lines on a contract, they can now call the skill directly inside Slack. By the time legal is ready to review, the markup is already prepared.

Steven agreed. The goal, in his opinion, is to stop letting information production slow you down:

“The bottleneck should be how you’re making decisions, what judgment you have, and how you’re informing both of those things — not producing the information.” — Steven Seperson

But there’s a prerequisite: clean data architecture. All of this — AI agents, automated reporting, real-time variance analysis — runs on a solid data foundation. Without it, you’ll find AI tools produce output you can’t trust.

When pushed on how much AI-driven work is autonomous versus requiring human review, Steven’s answer was direct: the vast majority still involves a human in the loop. And it should.

“Despite agentic AI capabilities, you’re still responsible for the output. Even if you’ve automated completely, there’s still something breaking when someone’s not looking.” — Steven Seperson

Craig’s framing was similar, and his governance point was worth noting. It’s not that autonomy is dangerous; it’s that every workflow eventually reports up to a person. The chain has a human at the top, even when agents are doing meaningful work inside it. What’s changed is the depth of the chain (i.e., how many steps happen before a human sees the output).

On the question of data governance specifically (who has access to what, how data moves between agent systems, etc.), both Steven and Craig were clear that this is not something finance can outsource to IT or leave for legal to figure out.

Finance will be a key pillar of AI governance, in Steven’s view, precisely because finance owns the data most agents depend on.

Someone asked whether the traditional annual budget cycle is dying. Steven’s answer was nuanced.

His position: the annual budget isn’t going away, but the pace of reforecasting probably has to accelerate. Rolling forecasts become table stakes. The question then becomes whether the insights you get from monthly reforecasting justify the time it takes to produce them.

More interestingly from Craig: AI is going to compress the time it takes to run a full budgeting cycle. A three-month process becomes a two-week process. When that happens, the math on how often to reforecast changes entirely.

“If I look at the companies that are doing true annual budgeting on a quarterly basis today, it’s a number that rounds to zero. In twelve months, I think it’ll probably be 10 to 20 percent.” — Craig Thompson
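A rough sketch of why the cycle length changes the reforecasting math, using the two durations Craig mentioned. Treating three months as 13 weeks and the year as 52 weeks are simplifying assumptions, and the function name is hypothetical.

```python
WEEKS_PER_YEAR = 52  # simplifying assumption


def share_of_year_spent_budgeting(cycle_weeks, cycles_per_year):
    """Fraction of the year the team spends inside a budgeting cycle."""
    return cycle_weeks * cycles_per_year / WEEKS_PER_YEAR


# A three-month cycle (taken here as 13 weeks) run once a year
slow_annual = share_of_year_spent_budgeting(13, 1)

# The same three-month cycle run every quarter fills the entire year
slow_quarterly = share_of_year_spent_budgeting(13, 4)

# A two-week cycle run every quarter takes 8 of 52 weeks
fast_quarterly = share_of_year_spent_budgeting(2, 4)

print(slow_annual, slow_quarterly, round(fast_quarterly, 2))
```

Under these assumptions, a full quarterly budget is not feasible with a three-month process, since the team would never leave budgeting mode. With a two-week process, it costs less calendar time than a single annual cycle does today.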

Steven’s point on long-range planning was a useful addition: AI is forcing finance to think more seriously about years two, three, four, and five. Short-term and long-term decisions need to stay aligned to show operating leverage over time, a harder job when technology is moving as fast as it is now.

Where to start if you’re early in your adoption

Steven’s advice for CFOs who haven’t yet started (or have started but feel like they’re moving slowly): start with the smallest possible useful thing.

“If you’re truly starting at zero, start with very simple things to get familiar. Whether it be improving an email or a report you send every Monday from Excel where the team pulls three data sources together and spits it out.” – Steven Seperson

The logic is that small wins change the team’s belief about what’s possible. Once the training wheels come off, the push to find more complex use cases tends to happen organically.

Craig’s suggestion for teams that haven’t started yet: block a day, get people in a room, and build something together. The mental hurdle from zero to one is harder than people expect. Shared practice, in his experience, is what gets teams past it.

Both Steven and Craig agreed in their closing: the best way to accelerate your own thinking is to talk to people who are on the same journey. Every organization is approaching AI differently, but the underlying problems are the same. The people figuring it out fastest are the ones comparing notes.

Finance teams that can see everything they're spending across tools are better positioned to make the cost-benefit calls that AI adoption keeps forcing. If you're managing rising spend without full visibility into what's driving it, see how SpendHound can help.

Book a demo below and we'll get you set up with our team.